Troubleshooting Hadoop cluster

Cluster deployment in Azure with 3.2.0.20 setup fails due to Security Update

Due to the May 2018 security update in Azure, the procedure for connecting over Remote Desktop (RDP) to a virtual machine created from an Azure Marketplace image has changed. To address this, apply the following workaround on the local machine where Cluster Manager is installed. You can deploy clusters in Azure using Syncfusion Big Data Cluster Manager 3.2.0.20 only after applying this workaround.

Change the Local Group Policy on the local machine

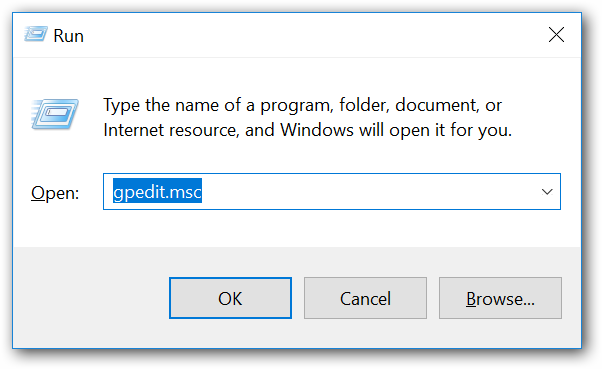

- Open the Local Group Policy Editor by running gpedit.msc from the Run window.

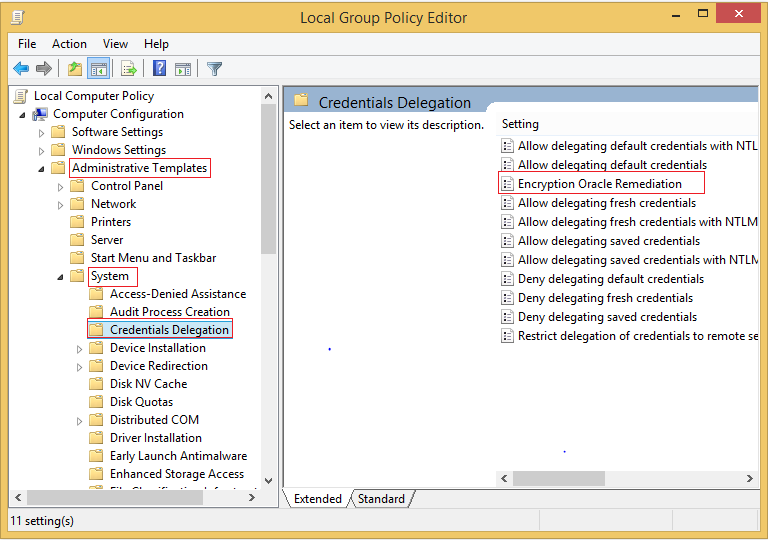

- Open the Encryption Oracle Remediation setting under Computer Configuration > Administrative Templates > System > Credentials Delegation, as shown in the image below.

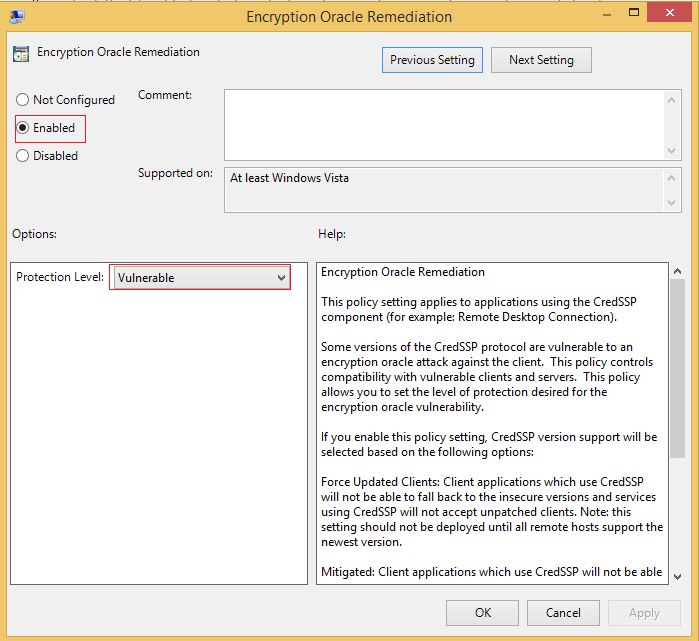

- Set the Encryption Oracle Remediation policy to Enabled and the Protection Level to Vulnerable.

For more information, see the following URL:

https://blogs.technet.microsoft.com/mckittrick/unable-to-rdp-to-virtual-machine-credssp-encryption-oracle-remediation/

NOTE

This workaround is required for cluster deployment in Azure until the next Big Data Platform release.

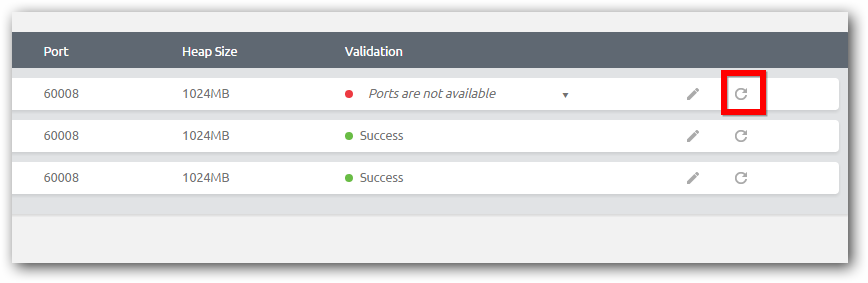

Ports are not available

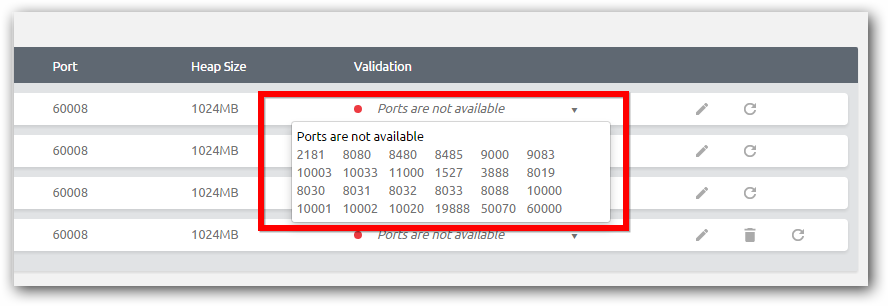

If the validation page reports a "ports are not available" error, make sure that no Hadoop services are running on the node and that the required ports are not being used by another program.

If the ports are in use by an existing Hadoop service or another program, stop it manually and refresh the node as shown in the image below. To stop a Hadoop service manually, use Task Manager to end all Java processes that belong to the Hadoop services.

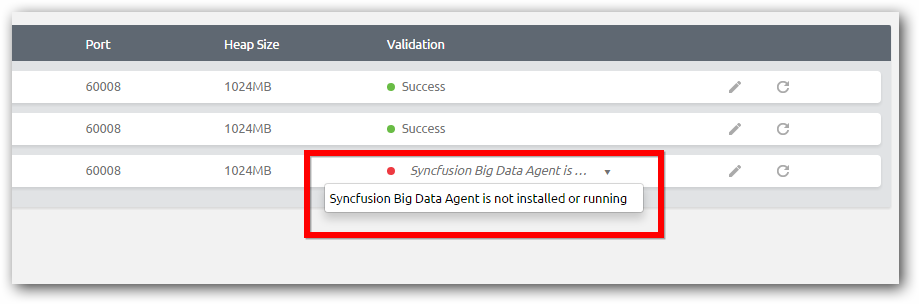
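Before refreshing the node, it can help to confirm which ports are actually free. The following is a minimal sketch, assuming Python 3 is available on the node. The function names `is_port_free` and `ports_in_use` are hypothetical helpers, not part of Cluster Manager, and the list of ports to check has to come from the validation page because it is not specified here.

```python
import socket


def is_port_free(port, host="0.0.0.0"):
    """Return True if a TCP socket can be bound to (host, port) on this machine."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((host, port))
        except OSError:
            # Bind failed: the port is already in use (or binding is not permitted).
            return False
    return True


def ports_in_use(ports, host="0.0.0.0"):
    """Return the subset of `ports` that cannot be bound on this machine."""
    return [port for port in ports if not is_port_free(port, host)]
```

Calling `ports_in_use` with the ports reported on the validation page returns the ones that still need to be released. A port that fails to bind only tells you that something holds it, not which program, so Task Manager is still needed to find and stop the owning process.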

Big Data agent is not installed or running

You will get this error if the agent is not installed or not running on the node, or if Cluster Manager cannot communicate with the agent service, which listens on port 60008 by default.

Follow these steps to make sure the agent service is running properly.

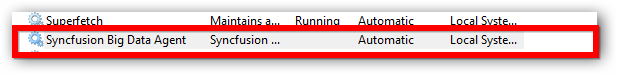

- Open the Run dialog and type services.msc.

- Ensure that the “Syncfusion Big Data Agent” service is running. If it is not running, start the service and use the refresh option on the Cluster Manager validation page to validate again.

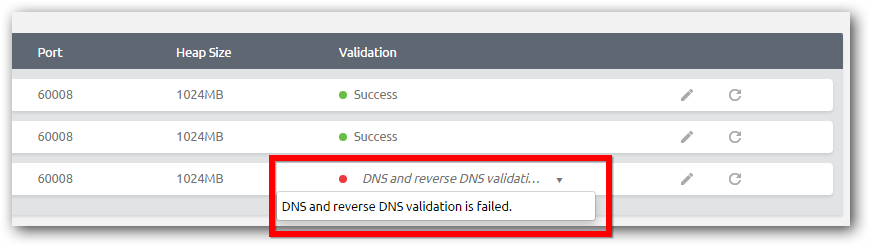
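If the service is running but the error persists, the agent port may be blocked between the Cluster Manager machine and the node. The following is a minimal sketch, assuming Python 3 on the Cluster Manager machine. `agent_port_reachable` is a hypothetical helper name, and 60008 is the default agent port mentioned above.

```python
import socket


def agent_port_reachable(host, port=60008, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and host name resolution failures.
        return False
```

A `True` result only shows that something accepts TCP connections on that port. It does not prove that the listener is the Big Data Agent, so treat it as a quick connectivity check alongside the service check in services.msc.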

DNS and reverse DNS are not getting resolved

Hadoop services require a proper network configuration so that DNS and reverse DNS lookups work for communication between cluster nodes. If the network is not configured properly, you will get this error.

By default, Windows resolves host names within the same network. If you have a different network configuration, such as VLAN settings, you have to configure the DNS server properly. Make sure that all nodes are in the same network and reachable by host name.

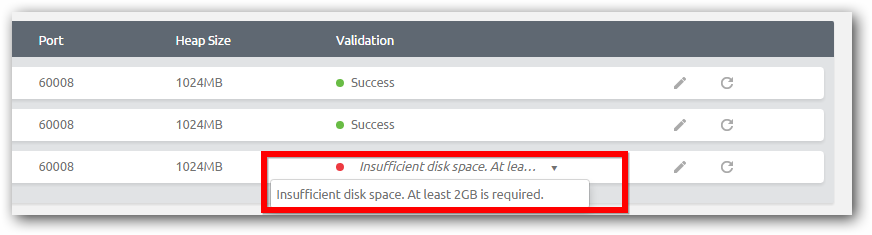
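Forward and reverse resolution can be checked from any node with a short script. The following is a minimal sketch, assuming Python 3 is available. `check_dns` is a hypothetical helper name and is not the check that Cluster Manager itself performs.

```python
import socket


def check_dns(name_or_ip):
    """Resolve a host name to an IPv4 address, then look that address up in reverse."""
    result = {"query": name_or_ip, "ip": None, "reverse_name": None}
    try:
        result["ip"] = socket.gethostbyname(name_or_ip)
    except OSError:
        # Forward (DNS) lookup failed, so there is no address to reverse-resolve.
        return result
    try:
        result["reverse_name"] = socket.gethostbyaddr(result["ip"])[0]
    except OSError:
        # Reverse DNS lookup failed; leave reverse_name as None.
        pass
    return result
```

Run it on each node against the host names of the other nodes. An `ip` of `None` points to a forward DNS problem, and a `reverse_name` of `None`, or one that differs from the expected host name, points to a reverse DNS problem.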

Insufficient disk space

We recommend a minimum of 50 GB of free space on each cluster node. At least 2 GB is needed for the Hadoop packages. You will get this error on the validation page if a node does not have enough free space. Free up the required disk space and retry.

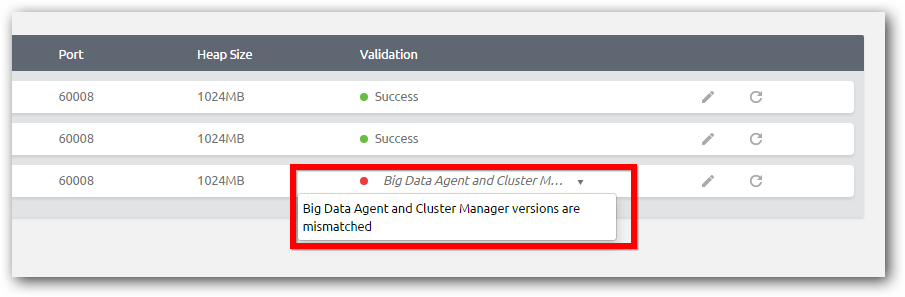
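Free space can be checked on each node before retrying. The following is a minimal sketch, assuming Python 3 is available. The helper names are hypothetical, the 50 GB default mirrors the recommendation above, and the sketch assumes binary gigabytes (1 GB = 1024³ bytes), which may differ slightly from how the validation page measures space.

```python
import shutil

BYTES_PER_GB = 1024 ** 3  # assumption: binary gigabytes


def free_space_gb(path):
    """Return the free space, in GB, on the drive that contains `path`."""
    return shutil.disk_usage(path).free / BYTES_PER_GB


def has_min_free_space(path, min_free_gb=50):
    """Return True if the drive containing `path` has at least `min_free_gb` GB free."""
    return free_space_gb(path) >= min_free_gb
```

For example, `has_min_free_space("C:\\")` checks the system drive on a Windows node against the recommended 50 GB.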

Version mismatch

Cluster Manager and the Big Data Agent on each node must be the same version for proper cluster management and monitoring. If the build version of the Agent on any node differs from that of Cluster Manager, you will get this error on the cluster validation page. Ensure that the build versions are the same and retry.

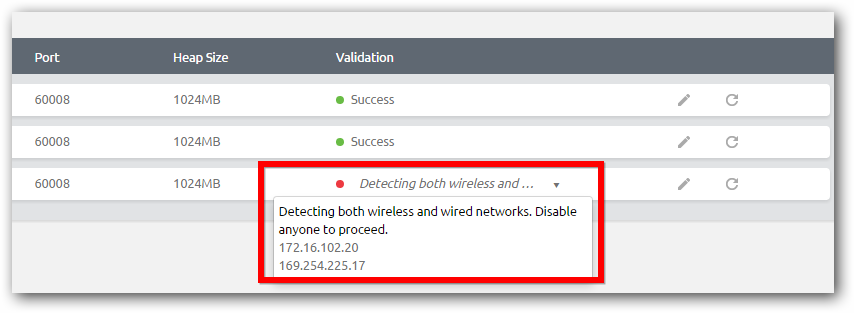

Multiple type of network

You can set up a cluster on either a wired or a wireless network, but not on a mix of both. If any node is configured on both a wired and a wireless network, you will get this error on the cluster validation page. Ensure that no node uses mixed network types and retry.

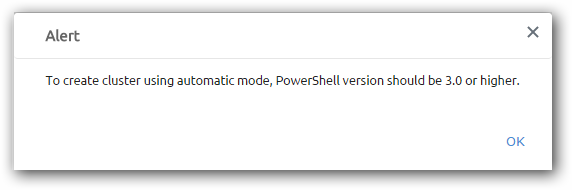

Upgrade PowerShell version

To create a cluster in automatic mode, PowerShell 3.0 or higher is required on all nodes. You will get this error if a node has a lower PowerShell version.

To create a secure cluster, PowerShell 3.0 or higher is required on the machine where Cluster Manager is installed.

Windows 8, Windows Server 2012, and later versions include a higher version by default. On Windows 7 or Windows Server 2008 machines, you need to upgrade PowerShell to a higher version.

| OS | Default PowerShell version | Packages to install (in this order) |
|---|---|---|
| Windows 7 | PowerShell 2.0 | 1. Windows 7 Service Pack<br>2. Windows Management Framework 3.0 |
| Windows Server 2008 R2 | PowerShell 2.0 | 1. Windows Server 2008 R2 Service Pack 1<br>2. Windows Management Framework 3.0 |

Pseudo node cluster - HBase services fail to run

In a pseudo node cluster, if the HMaster and HRegionServer services are not running, make sure that the letter case (upper/lower) of the host name in the etc/hosts file matches the case of the value of the ‘hbase.zookeeper.quorum’ property in hbase-site.xml. If the case differs, the internal ZooKeeper service of HBase fails to start, which causes the HBase services to shut down.

For example, in the following case the internal ZooKeeper service fails to start:

| Setting | Value |
|---|---|
| Host name entry in "C:\Windows\System32\drivers\etc\hosts" | 172.16.150.3 machine1 |
| Value of property 'hbase.zookeeper.quorum' in hbase-site.xml | Machine1 |
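The case comparison described above can be automated. The following is a minimal sketch, assuming Python 3 and assuming that you paste in the hosts file content and the quorum value yourself. `hosts_file_names` and `find_case_mismatches` are hypothetical helper names; the sketch also assumes the quorum value is a comma-separated list whose entries may carry an optional `:port` suffix.

```python
def hosts_file_names(hosts_text):
    """Return every host name listed in the text of a hosts file."""
    names = []
    for line in hosts_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and surrounding spaces
        if not line:
            continue
        parts = line.split()
        names.extend(parts[1:])  # parts[0] is the IP address
    return names


def find_case_mismatches(hosts_text, quorum_value):
    """Return (quorum_host, hosts_file_name) pairs that differ only in letter case."""
    names = hosts_file_names(hosts_text)
    mismatches = []
    for entry in quorum_value.split(","):
        host = entry.strip().split(":", 1)[0]  # ignore an optional :port suffix
        if not host:
            continue
        for name in names:
            if name.lower() == host.lower() and name != host:
                mismatches.append((host, name))
    return mismatches
```

With the values from the example above, `find_case_mismatches("172.16.150.3 machine1", "Machine1")` returns `[("Machine1", "machine1")]`, which flags the mismatch. An empty list means no case difference was found.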

Cluster Manager UI is not rendering properly in Internet Explorer

Make sure that no security enhancement that restricts JavaScript is enabled, and that Compatibility View is not enabled by default for internally hosted sites in Internet Explorer.

Collect Logs does not gather any logs and Configuration page fails to load

If Cluster Manager is installed on a machine that is not one of the cluster nodes, the Collect Logs and Configuration features might not work properly.

The likely root cause is that the cluster nodes cannot reach the Cluster Manager machine to upload the log files and configuration files. To resolve this, add the host information of the Cluster Manager machine to the “etc/hosts” file on all nodes that belong to the cluster.

Oozie server not running on Ubuntu 16.04

The latest version of JDK 7 does not include time zone data on Ubuntu 16.04. As a result, the Oozie server fails to start with a message like “Invalid Time Zone: UTC”.

Solution:

Update the time zone data for JDK 7 on Ubuntu 16.04 by running the following commands:

sudo apt-add-repository ppa:justinludwig/tzdata

sudo apt-get update

sudo apt-get install tzdata-java*

Update Oozie share lib jar files

If any changes are made in Oozie’s share lib folder (/user/share/lib/ on your HDFS), such as adding a new jar file, they are not reflected in the Oozie server unless the Oozie server is restarted or the share lib is updated.

Use the following command to update the Oozie share lib:

Windows:

..\SDK\Oozie\bin> oozie.cmd admin -sharelibupdate -oozie http://< host >:11000/oozie

Linux:

../SDK/Oozie/bin$ ./oozie admin -sharelibupdate -oozie http://< host >:11000/oozie